ChatGPT is well known as an AI tool capable of answering questions and producing essays and simple code. However, Microsoft researchers have now shown that the bot’s capabilities go beyond generating intelligible text responses to natural language prompts. The famous AI tool can not only take part in interactions between humans and robots but also use sensor data to program robot operations.

Recently, a research team at Microsoft conducted a study to “see if ChatGPT can think beyond the text, and reason about the physical world to help with robotics tasks.” On Monday, February 20, 2023, the team published a technical paper that provides a set of design principles for guiding language models to complete robotics tasks. The researchers showed that ChatGPT is indeed capable of instructing robots, though with certain limitations.

Why can’t we communicate with robots?

Wouldn’t it be superb if we could interact with robots in human language? Just imagine telling your home robot to make the bed, vacuum the floor, or make you some coffee, and having it carry out each task right away. While this may not be impossible, there is a significant obstacle: human language.

Language is the most intuitive way for humans to express themselves, but that is not the case for robots. They are, at least for the time being, incapable of grasping and responding to human language. Thus, to interact with and control them, we need hand-written code.

This is precisely the gap the Microsoft research team attempted to bridge. The researchers have been investigating ways to use ChatGPT, a new AI language model from OpenAI, to change this situation and enable realistic human-robot interactions.

But why ChatGPT?

Being a language model trained on large bodies of text and human interactions, ChatGPT can produce coherent and grammatically sound answers to a variety of prompts and inquiries. Given its language and coding abilities, the researchers wanted to see whether it can also reason about the physical world and thus assist with robotic tasks.

The primary goal was to facilitate human-robot interaction without the need to learn a programming language. This involves specific challenges. The main one was teaching the AI tool to solve problems while taking into account the laws of physics and the context of the operating environment.

The results were surprising. The team found that ChatGPT can handle a lot on its own. However, it is not fully independent: it still needs human help and supervision. In the technical paper, the research team detailed a set of principles for guiding language models towards solving robotic tasks. Those principles encompass a high-level API (Application Programming Interface), specific prompting structures, and human feedback through text.
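To make the first two principles more concrete, here is a minimal sketch of how a high-level API could be described to a language model inside a prompt. The function names in `API_DOCS` and the `build_prompt` helper are hypothetical and chosen for illustration only; they are not taken from the Microsoft paper.

```python
# Hypothetical high-level drone API (illustrative names, not from the paper).
API_DOCS = {
    "takeoff()": "Take off and hover in place.",
    "land()": "Land at the current position.",
    "fly_to(x, y, z)": "Fly to the given coordinates in meters.",
    "get_position()": "Return the current (x, y, z) position.",
}


def build_prompt(task, api_docs):
    """Assemble a prompt that lists the allowed functions before the task."""
    lines = ["You are controlling a drone. Use only these functions:"]
    for signature, description in api_docs.items():
        lines.append(f"- {signature}: {description}")
    # Encourage the model to ask instead of guessing (human feedback through text).
    lines.append("Ask a clarifying question if the task is ambiguous.")
    lines.append(f"Task: {task}")
    return "\n".join(lines)


prompt = build_prompt("Inspect the top shelf.", API_DOCS)
print(prompt)
```

The resulting text would be sent to the model, and the user would then review the generated code and reply in plain language if something needs to change.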

How can ChatGPT overcome challenges in robotics?

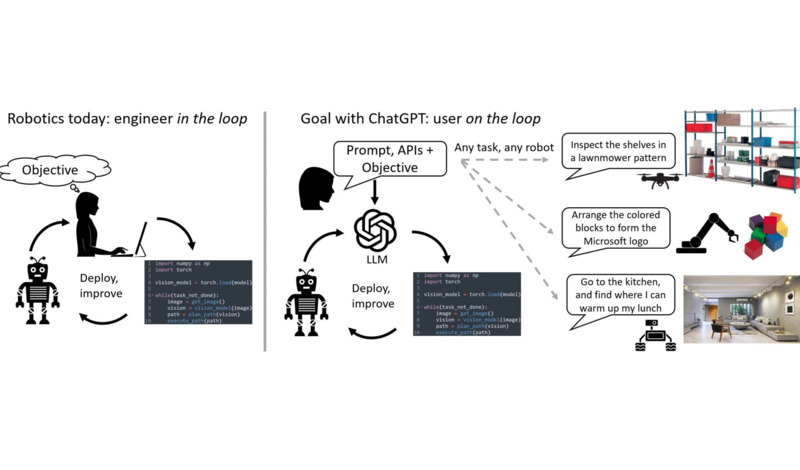

Presently, interaction with a robot starts with an engineer or technical user who translates the task requirements into code that the robot can understand. To keep the interaction going or correct the robot’s behavior, the engineer needs to “sit in the loop,” i.e., write additional code and specifications. The whole process is slow because the user has to write low-level code, expensive because it requires robotics experts, and inefficient because it takes multiple iterations to get things working properly.

Source: Microsoft

ChatGPT opens up a new robotics paradigm in which a non-technical user can “sit in the loop,” giving high-level feedback to the large language model (LLM) while supervising the robot’s performance. By following the set of design principles, ChatGPT generates code for robotics scenarios. The LLM’s knowledge is then used to control robots across a variety of tasks.

The Microsoft research team demonstrated numerous instances of ChatGPT solving robotics puzzles, as well as sophisticated robot behaviors in the manipulation, aerial, and navigation domains.

ChatGPT in action

During the study, ChatGPT got a chance to control an actual drone. The AI tool turned out to be a remarkably intuitive language-based interface between the robot and the user. Whenever the instructions were unclear or ambiguous, ChatGPT asked for clarification. In the end, it wrote code structures for the drone, including a zig-zag flight pattern for inspecting shelves.
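As an illustration of what a zig-zag inspection pattern might look like in code, the sketch below generates waypoints that sweep across a shelf face row by row. The `zigzag_waypoints` function and its parameters are assumptions made for this example; the code ChatGPT actually produced in the study is not reproduced here.

```python
def zigzag_waypoints(width, height, rows):
    """Return (x, z) waypoints that sweep a shelf face in alternating directions.

    width and height are the shelf dimensions in meters; rows is the number
    of horizontal passes (at least 2, so the bottom and top are both covered).
    """
    if rows < 2:
        raise ValueError("rows must be at least 2")
    waypoints = []
    for i in range(rows):
        z = height * i / (rows - 1)  # evenly spaced heights from 0 to height
        # Even rows go left to right, odd rows go right to left.
        start_x, end_x = (0.0, width) if i % 2 == 0 else (width, 0.0)
        waypoints.append((start_x, z))
        waypoints.append((end_x, z))
    return waypoints


for point in zigzag_waypoints(width=2.0, height=1.0, rows=3):
    print(point)
```

Each waypoint could then be passed to whatever high-level "fly to" function the robot exposes.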

Source: Microsoft

The team also used ChatGPT in a simulated industrial inspection scenario built with the Microsoft AirSim simulator. The bot controlled the drone precisely by understanding the user’s high-level intent and geometric cues.

Extra prompts for complicated tasks

In addition to controlling a drone, ChatGPT got an opportunity to manipulate a robot arm. Using conversational feedback, the researchers taught the bot to combine the initially provided APIs into more complex high-level functions, which ChatGPT coded on its own. It was able to perform actions such as stacking blocks by logically chaining the skills it had acquired.
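The idea of composing simple API calls into higher-level skills can be sketched as follows. `SimArm` is a toy stand-in for a robot arm, and `pick_up`, `place_at`, and `stack_blocks` are hypothetical helpers written for this example; none of these names come from the study.

```python
class SimArm:
    """Toy simulated arm (an assumption for this sketch, not a real robot API)."""

    def __init__(self, blocks):
        self.blocks = dict(blocks)  # block name -> (x, y, z) position
        self.position = (0.0, 0.0, 0.0)
        self.held = None

    def move_to(self, pos):
        self.position = pos

    def grab(self, name):
        self.held = name

    def release(self):
        # The released block stays where the gripper currently is.
        self.blocks[self.held] = self.position
        self.held = None


def pick_up(arm, name):
    """Higher-level skill: move to a block and grab it."""
    arm.move_to(arm.blocks[name])
    arm.grab(name)


def place_at(arm, pos):
    """Higher-level skill: carry the held block to a position and release it."""
    arm.move_to(pos)
    arm.release()


def stack_blocks(arm, names, base, block_height):
    """Stack the named blocks at base, each one block_height above the last."""
    x, y, z = base
    for level, name in enumerate(names):
        pick_up(arm, name)
        place_at(arm, (x, y, z + level * block_height))


arm = SimArm({"red": (1.0, 0.0, 0.0), "blue": (2.0, 0.0, 0.0)})
stack_blocks(arm, ["red", "blue"], base=(0.0, 0.0, 0.0), block_height=0.5)
print(arm.blocks)
```

The point of the layering is that `stack_blocks` never touches the low-level calls directly, which mirrors how the acquired skills were chained together.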

Additionally, ChatGPT was given the task of constructing the Microsoft logo out of wooden blocks. Here the model provided an intriguing example of connecting the textual and physical domains. Besides recalling the logo from its internal knowledge base, it was also able to “draw” the logo as SVG code.
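To show what "drawing" a logo as SVG code means in practice, here is a small sketch that emits a two-by-two grid of colored squares as SVG markup. The `four_square_svg` function, the sizes, and the named colors are assumptions for illustration; the colors are rough approximations rather than official brand values, and this is not the output ChatGPT produced.

```python
def four_square_svg(size=100, gap=10):
    """Return SVG markup for four colored squares arranged in a 2x2 grid."""
    # Approximate colors in reading order: top-left, top-right,
    # bottom-left, bottom-right.
    colors = ["red", "green", "blue", "gold"]
    total = 2 * size + gap
    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{total}" height="{total}">'
    ]
    for i, color in enumerate(colors):
        x = (i % 2) * (size + gap)   # column offset
        y = (i // 2) * (size + gap)  # row offset
        parts.append(
            f'<rect x="{x}" y="{y}" width="{size}" height="{size}" fill="{color}"/>'
        )
    parts.append("</svg>")
    return "\n".join(parts)


svg = four_square_svg()
print(svg)
```

The same square coordinates could, in principle, be reused as target positions for physical blocks, which is the link between the textual and physical domains.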

Next, ChatGPT was prompted to create an algorithm that would allow a drone to fly through a space without colliding with obstacles. After being told that the drone has a forward-facing distance sensor, the bot immediately coded most of the algorithm’s fundamental elements. This assignment required additional prompts from humans, but ChatGPT managed to make localized code improvements based on language feedback alone.
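A very simplified version of such an obstacle-avoidance rule, driven by a single forward-facing distance reading, might look like the sketch below. The `avoidance_command` function, its thresholds, and its return format are assumptions for this example and do not reproduce the algorithm from the study.

```python
def avoidance_command(distance_ahead, heading_error_deg,
                      safe_distance=2.0, turn_step_deg=30.0):
    """Return (forward_speed, yaw_change_deg) for one control step.

    distance_ahead: reading from the forward-facing sensor, in meters.
    heading_error_deg: angle from the current heading to the goal, in degrees.
    """
    if distance_ahead < safe_distance:
        # Blocked: stop moving forward and rotate to look for a clear path.
        return 0.0, turn_step_deg
    # Clear: move forward and steer toward the goal, limiting the turn rate.
    yaw = max(-turn_step_deg, min(turn_step_deg, heading_error_deg))
    return 1.0, yaw


print(avoidance_command(1.0, 0.0))    # obstacle close ahead
print(avoidance_command(5.0, -10.0))  # path clear, goal slightly to one side
```

A rule like this should be tried in simulation first, since a single forward-facing sensor cannot see obstacles to the side while the drone turns.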

Bringing robots to the real world

Because interacting with them is so limited, robots still largely belong in research labs. With this study, the Microsoft research team sought to bring robotics to a wider audience. The team believes that language-based robot control will be essential for taking robotics out of the labs someday.

However, it should be noted that ChatGPT’s outputs are not meant to be deployed on robots without careful analysis. The team encourages users to harness the power of simulation to evaluate the algorithms before deploying them in the real world. Of course, taking safety precautions is a must.

The study represents only a fraction of the potential that LLMs can unlock in the realm of robotics. Hopefully, more work is yet to come.